Essay: The burden of proof

‘Extraordinary claims require extraordinary evidence’.

The famous astrophysicist Carl Sagan popularised this maxim in reference to UAPs (Unidentified Aerial Phenomena) and possible visits from non-human intelligences. He made a legitimate demand for scientific rigour, without precisely defining what constitutes extraordinary evidence. Yet epistemology teaches us that many major advances are due to open-mindedness and extraordinary curiosity. Exceptional discoveries also require exceptional open-mindedness.

In the first article in this series, we established that the US Department of War (formerly the Department of Defence or DOD) had officially recognised the existence of UAPs in a report by the ODNI (Office of the Director of National Intelligence) published in 2021. This recognition marks a profound break after decades of denial and stigmatisation. This document, entitled Preliminary Assessment: Unidentified Aerial Phenomena, attests that unidentified aerospace phenomena are being observed and taken seriously by official bodies. Their nature or origin, whether human or non-human, remains completely undetermined.

But what exactly needs to be proven?

That the phenomenon does not correspond to any known natural process?

That it stems from technological breakthroughs kept secret by one or more governments?

That it manifests the presence of non-human intelligence on Earth?

Or that there is nothing to explain because it is a cultural or perceptual construct?

The concept of evidence seems self-evident, but it rests on a complex construct. Before even defining the nature of the evidence to be sought, it is necessary to understand what we accept as such and according to what criteria. What we consider conclusive depends on our perceptual abilities, our epistemological frameworks, our cultural and scientific norms, the technological tools at our disposal, and the current state of our knowledge.

To illustrate this complexity, let us imagine the following scenario. The President of the United States calls an extraordinary press conference and announces that he has evidence that a non-human intelligence is interacting with Earth and its inhabitants. He then presents a video of remarkable quality showing an object with no wings or apparent propulsion system emerging from the ocean and then performing a series of manoeuvres that defy known laws of physics, without emitting any sound. The object then lands on the shore and several clearly non-human beings emerge from it. After the broadcast, he reveals a report from official scientific institutions containing data that supposedly validates his claims.

Would such an event be enough to bring about a paradigm shift? Probably not, and for good reason.

The video could be a fabrication created using artificial intelligence or part of a disinformation campaign. The episode of the alleged weapons of mass destruction in Iraq illustrates how government authorities can present unfounded claims by relying on the trust placed in their institutions. In 2003, Colin Powell held up a small vial before the UN Security Council to illustrate the quantity of anthrax that Iraq was alleged to possess. The vial was a prop, and the biological weapons it was meant to evoke were never found. This manipulation was intended to convince the public and the international community of the need to invade Iraq.

In our fictional illustration, a report bearing the government seal cannot constitute irrefutable proof. Science works by consensus, collective validation and reproducibility. Samples from the device and biological analyses of the occupants, as well as the methods and protocols used, would need to be made available to the global scientific community for independent study. This process of confirmation or invalidation by laboratories around the world could take months or even years.

The only truly acceptable evidence would result from this international scientific endeavour, establishing, for example, that the craft’s materials have isotopic ratios incompatible with all known natural and industrial processes, that its propulsion system defies established physical laws, or that non-human entities have a biochemical or genetic system unknown to science.

This example illustrates the fundamental complexity of the concept of proof when applied to unidentified aerospace phenomena. To understand what our minds accept as valid, however, we must first examine the filters through which we interpret the world.

Our senses, filters of reality

In its common sense, evidence is what establishes truth and confirms that a fact is real. But reality is not a universal absolute. It is subjective and constructed through our limited perceptual tools, whether natural or manufactured. What we call real is therefore intrinsically linked to what we are capable of perceiving.

Animals’ sensory modalities are sometimes radically different from ours. For example, a dog’s sense of smell is up to 100,000 times more sensitive than that of a human. An eagle can spot a rabbit from more than four kilometres away. A shark can detect a drop of blood diluted in the equivalent of a swimming pool.

These beings live in the same physical world as we do, but their perception reconstructs it in ways that produce a fundamentally different reality.

Even within the same species, realities are not uniform.

Sighted people and those blind from birth do not construct their relationship to space in the same way.

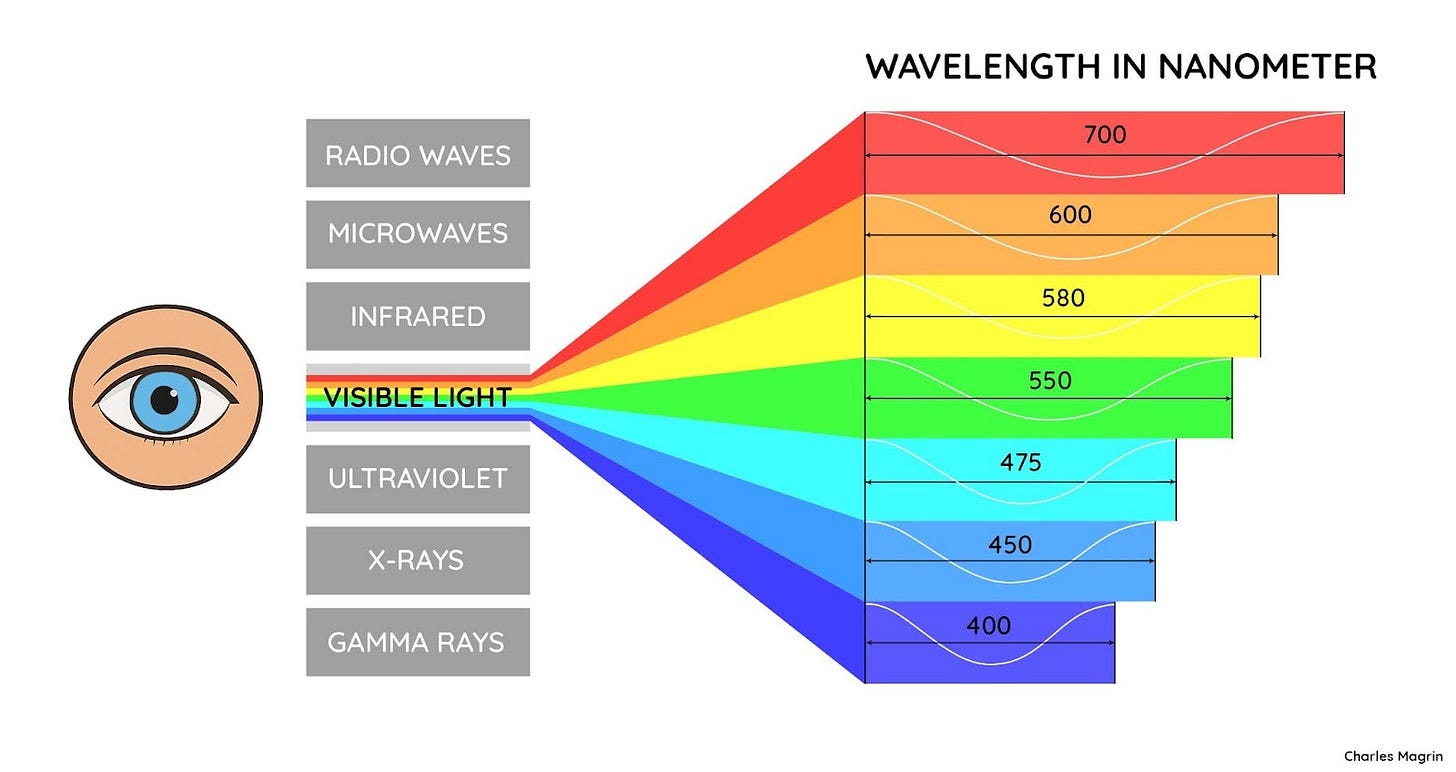

Our vision, moreover, captures only a tiny portion of reality. It is limited to a narrow band of the electromagnetic spectrum, between approximately 400 and 700 nanometres, or less than one billionth of the known spectrum measured by wavelength, which ranges from a few picometres (gamma rays) to several kilometres (radio waves). What we call ‘colours’ are merely our brain’s interpretation of different frequencies of this radiation. Colours are therefore not objective or intrinsic properties of the radiation itself.

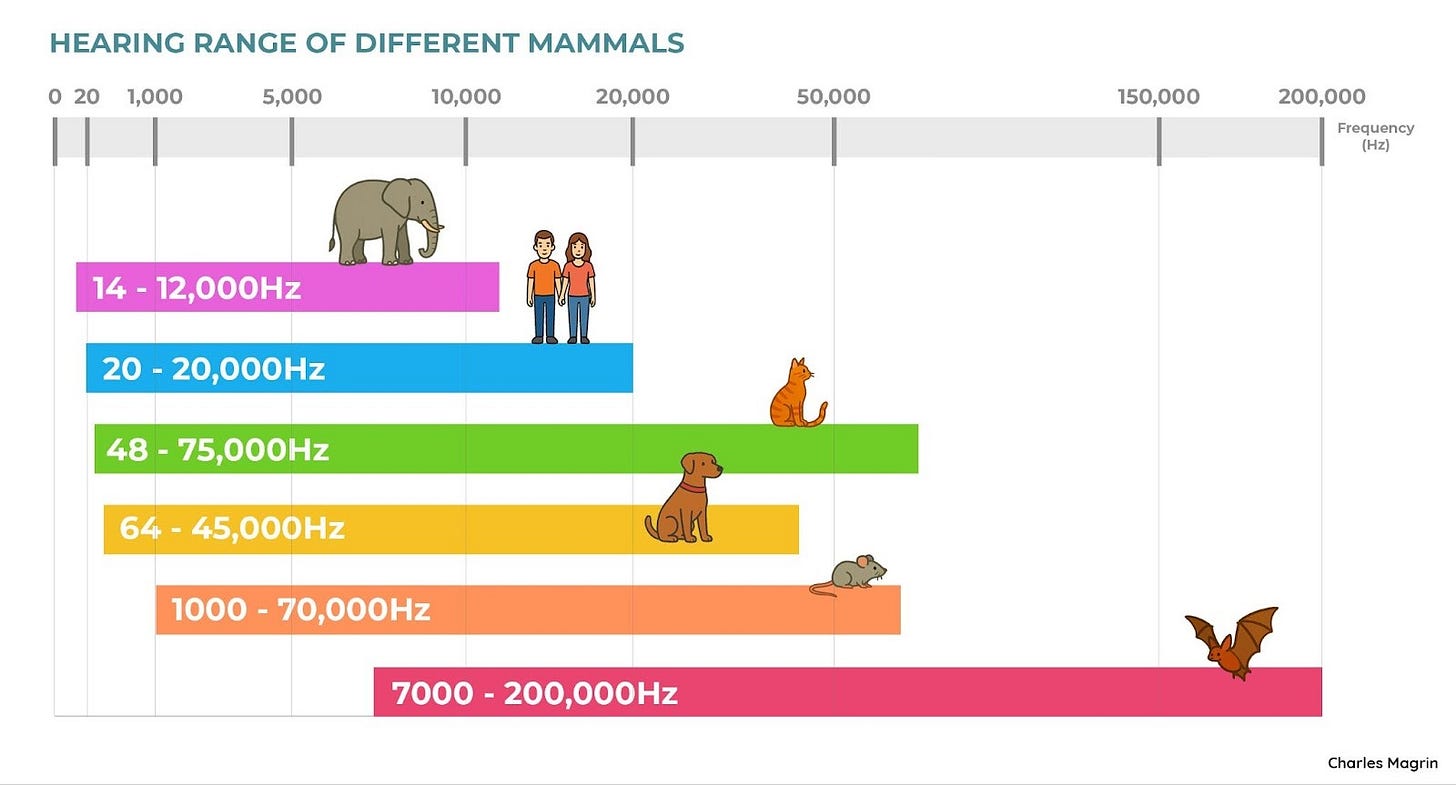

Our hearing, too, is limited to a frequency range between 20 and 20,000 hertz, while the sound spectrum studied by science extends from approximately 1 Hz to over 10 GHz, which is about 500,000 times wider than our audible range.

We therefore live among oceans of signals, but our perception only skims the surface.
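The two orders of magnitude quoted in this section can be checked with a few lines of arithmetic. The sketch below is only illustrative: it compares the ranges on a linear scale, and the numerical bounds chosen for ‘a few picometres’ and ‘several kilometres’ (1 pm and 1 km) are assumptions made for the calculation, not figures from a reference.

```python
def linear_fraction(band, full_range):
    """Width of `band` divided by width of `full_range`, on a linear scale."""
    return (band[1] - band[0]) / (full_range[1] - full_range[0])

# Wavelengths in metres. The spectrum bounds are assumed round values.
VISIBLE_M = (400e-9, 700e-9)
SPECTRUM_M = (1e-12, 1e3)      # ~1 picometre to ~1 kilometre (assumption)

# Frequencies in hertz, as quoted in the text.
AUDIBLE_HZ = (20.0, 20_000.0)
ACOUSTIC_HZ = (1.0, 10e9)      # ~1 Hz to ~10 GHz

visible_fraction = linear_fraction(VISIBLE_M, SPECTRUM_M)
acoustic_ratio = 1 / linear_fraction(AUDIBLE_HZ, ACOUSTIC_HZ)

print(f"Visible share of the spectrum: {visible_fraction:.1e}")
print(f"Studied acoustic range vs audible range: {acoustic_ratio:,.0f}x")
```

With these assumed bounds, the visible share comes out well below one billionth and the acoustic ratio close to 500,000, in line with the figures given above. A wider assumed radio range would only make the visible share smaller.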

The brain, architect of our perception

While our senses capture only a fraction of the raw signals present in our environment, what we consciously perceive is even more limited. According to the work of Timothy D. Wilson (2002), our senses send approximately 11 million bits of information per second to our brain, yet our consciousness processes only 40 to 50 bits per second.

This drastic sorting is made possible by an automatic neurological process called sensory filtering. It acts as an internal barrier, inhibiting or reducing stimuli deemed irrelevant in order to preserve our cognitive stability. In other words, 99.9996% of the information captured is discarded and only 0.0004% reaches our consciousness.

Imagine a huge library where 11 million new books arrive every second and where the sole super-librarian can only sort about 40 at a time!
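The percentages above follow directly from the two rates attributed to Wilson. As a quick check, and nothing more than that, the short sketch below recomputes the share of information that reaches consciousness for both ends of the 40 to 50 bits per second range. The function name is purely illustrative.

```python
SENSORY_BITS_PER_SECOND = 11_000_000   # figure quoted in the text

def retained_percent(conscious_bits_per_second):
    """Percentage of the sensory input that reaches conscious processing."""
    return conscious_bits_per_second / SENSORY_BITS_PER_SECOND * 100

for rate in (40, 50):
    kept = retained_percent(rate)
    print(f"{rate} bits/s: {kept:.4f}% retained, {100 - kept:.4f}% discarded")
```

At 40 bits per second, the retained share rounds to 0.0004% and the discarded share to 99.9996%, which matches the figures quoted.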

The role of selective attention in the subjective experience of reality

Sensory filtering is only one of the first steps in information processing. Once filtered, the retained signals are organised by the brain into a coherent and usable representation. This process is dynamic and guided by several cognitive factors.

Attention plays a central role in our perception of the world. It acts as an active filter, guiding what we notice and what we ignore, often without our being aware of it. This process is influenced by several variables: our expectations guide our vigilance, our emotions amplify certain signals, our culture shapes what we pay attention to, and the immediate context constantly modulates our field of perception.

To gain a concrete understanding of this, a famous cognitive psychology experiment offers a small observation challenge. It takes less than two minutes. Watch it before reading on.

THE MONKEY BUSINESS ILLUSION

If you took part in the experiment, you may have been surprised by what you didn’t see. This demonstration powerfully illustrates the fact that our attention profoundly structures our conscious perception but also limits its scope. What we see can therefore be very different from what actually happened.

Bayesian perception: when the brain anticipates the world

Perception is based in particular on a predictive mechanism. The brain does not simply analyse what comes from the senses, it anticipates. It constantly generates hypotheses about what we are going to perceive, then adjusts these predictions in light of the errors between what was expected and what is perceived. This model, often referred to as Bayesian, is now widely accepted in cognitive science, although some aspects of its scope or mechanisms are still debated.

This process allows us to act quickly in a complex environment, but it can also lead to illusions, distortions or misbeliefs when the signal is ambiguous, unexpected or insufficient.
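A toy calculation can make the idea of a Bayesian update more concrete. The sketch below is a deliberately simplified illustration, not a model of actual neural processing: the two hypotheses and all the probabilities are invented for the example. It shows how a strong prior expectation can dominate an ambiguous signal.

```python
def bayes_update(prior, likelihood):
    """Return posterior probabilities for each hypothesis after one observation.

    prior:      P(hypothesis) before the observation
    likelihood: P(observation | hypothesis)
    """
    unnormalised = {h: prior[h] * likelihood[h] for h in prior}
    total = sum(unnormalised.values())
    return {h: value / total for h, value in unnormalised.items()}

# Invented numbers: the observer strongly expects an ordinary aircraft...
prior = {"aircraft": 0.90, "something else": 0.10}
# ...and sees an ambiguous light that fits "something else" twice as well.
likelihood = {"aircraft": 0.30, "something else": 0.60}

posterior = bayes_update(prior, likelihood)
for hypothesis, probability in posterior.items():
    print(f"{hypothesis}: {probability:.2f}")
```

Even though the observation fits the second hypothesis better, the posterior still favours ‘aircraft’ at roughly 0.82, because the prior was so strong. The same arithmetic works in the other direction for an observer whose prior favours an extraordinary explanation.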

Cognitive biases, distortions and collective illusions

Many cognitive processes can also influence how we perceive, remember and interpret events. Research in cognitive psychology has identified numerous cognitive biases that systematically affect our judgements and perceptions. The sheer number of these biases demonstrates the extent to which our minds distort, filter or reinterpret reality. The following list is not exhaustive, but illustrates some of the most well-documented biases and phenomena, the effects of which can combine or even amplify each other.

Confirmation bias

The tendency to favour information that confirms our prior beliefs, while ignoring or discrediting information that contradicts them.

Example: an observer convinced that UFOs are of non-human origin may interpret any unusual light as evidence to support this, even in the absence of objective correlates.

Illusory truth effect

A statement that is repeated frequently, particularly in the media or on social networks, tends to be perceived as more credible, even if it is factually incorrect.

Example: The idea that we only use 10% of our brain, repeated in numerous films and articles, is widely accepted despite being scientifically unfounded.

Pareidolia

Our brain constantly searches for familiar shapes in ambiguous or incomplete images. This ability to recognise shapes quickly is undoubtedly an adaptive trait, but it can also lead us astray. Neuroscience shows that our brains are particularly adept at detecting faces, a crucial ability for human survival and socialisation. This evolutionary predisposition explains our tendency to recognise faces in ambiguous shapes: the brain errs on the side of seeing a face that is not there rather than missing one that is.

A classic example is perceiving a silhouette in a cloud, or a face on the surface of Mars, such as the famous ‘Face on Mars’ in the Cydonia region, photographed by the Viking 1 orbiter in 1976, which turned out to be a simple rock formation seen under particular lighting conditions.

A more recent example illustrates the same mechanism. In May 2025, during a public conference in Washington, Luis Elizondo presented an aerial photo showing a huge circular object. In less than 24 hours, this image was identified as simply a view of agricultural irrigation circles.

In what appears to be an attempt to raise awareness among decision-makers and open up the debate to as many people as possible, this unverified dissemination – as Elizondo himself admits – risks having the opposite effect. By weakening the credibility of the message, it provides easy ammunition for those seeking to discredit the entirety of the work presented at the event.

Memory distortion and social contagion

Memory also plays a role in the subjective construction of reality. Far from faithfully recording the past, it reconstructs it each time it is recalled. This process is influenced by context, emotions, expectations, and information that has come to light after the event. Thus, what we believe we remember may be an adjusted and sometimes distorted version of the initial experience.

Example: witnesses to the same event may, after discussing it among themselves, find that their memories align with a dominant version, even if it is incorrect.

Furthermore, as researcher Diana Pasulka shows in her book American Cosmic, media narratives, religious beliefs, popular culture, and digital technologies shape a cognitive environment where the boundary between fact, interpretation, and projection becomes blurred. Collective belief is then imprinted on individual memory as a sincere but reconstructed memory.

In order to ensure our survival, we do not need to perceive all aspects of our environment, but only what is sufficient to guide our actions. This is the price of efficiency. What we call reality is, therefore, the result of a gradual transformation, brought about by our filters, our biases, our culture, our reconstructed memories and our emotions. The product of this mechanism is an illusory truth, shaped to be coherent and functional.

A crisis of visual evidence

We have seen how strongly each person’s perception of the world is filtered, biased, and reconstructed by nature. Added to these cognitive vulnerabilities is now a technological threat. In a world saturated with images, visual evidence, once central, has lost its demonstrative value. In the age of artificial intelligence, it has become possible to generate credible videos, voices or faces in a matter of seconds. Deepfakes are no longer a technical curiosity, but a source of constant suspicion. A video can now be authenticated by an authority and yet still be considered suspicious, simply because the human eye is no longer sufficient to decide. Visual evidence has become structurally vulnerable; images alone no longer certify anything.

In the contemporary study of unidentified aerospace phenomena, this reality imposes a new requirement. All visual recordings must now be supported by converging data from other sources that are less prone to manipulation or ambiguity.

When our flaws become targets: towards the strategic exploitation of cognitive biases

While these biases and perceptual distortions are part of normal cognitive functioning, they can also be deliberately exploited. The psychological mechanisms that shape our individual reality are also powerful levers for guiding collective perception.

Government agencies have sought to systematically exploit these cognitive vulnerabilities. The MK-Ultra project, conducted by the CIA from 1953 to 1973 and revealed during the Church Committee hearings in 1975, was specifically aimed at developing techniques of psychological manipulation. Using drugs, sensory deprivation and various forms of psychological trauma, this programme illustrates how the agency attempted to exploit the flaws of the human psyche for strategic purposes. The official existence of units dedicated to psychological operations within the Pentagon shows that this approach remains relevant today.

This exploitation is based on a detailed understanding of attentional dynamics, shared beliefs and media virality circuits. Once these mechanisms are at work, identifying what is informative or misleading requires constant vigilance, as the truth always seems to slip between the lines.

From methodical scepticism to scientific dogma

The cognitive biases we have just explored reveal the fragility of our perceptual and interpretative processes. Faced with these limitations, scepticism is a cornerstone of the scientific method, helping to guard against these pitfalls. However, this mindset can be expressed either constructively or dogmatically, strengthening our approach to knowledge or hindering it, depending on how it is applied.

Scientific scepticism proceeds by methodical doubt. Rather than accepting or rejecting on principle, it allows us to temporarily suspend judgement while we examine the available data. This stance promotes a rigorous evaluation of the facts and maintains the openness necessary to revise our conclusions.

Among the sceptic’s tools is Ockham’s razor, the principle that the simplest explanation is generally preferable – but, crucially, only if it accounts for all the observations. This principle is not absolute, as science sometimes accepts complex explanations when simplicity is not sufficient to explain the observed phenomena. Quantum mechanics and general relativity, for example, are complex theories that have become accepted because they explain the data better than the simpler models that preceded them.

Scepticism and confirmation bias

The analysis of the Aguadilla case by the AARO (All-domain Anomaly Resolution Office), the US Department of Defence office responsible for investigating UAPs, illustrates how scepticism can drift into dogma. Faced with an infrared video showing an object behaving in a seemingly inexplicable manner, the AARO’s official report concludes from the outset that the objects were probably ‘sky lanterns’. This approach reverses the scientific method by stating the conclusion before the analysis.

The AARO presents no evaluation grid, no hypothesis testing protocol, and no criteria for ranking possible explanations. This opacity makes their analysis non-reproducible, non-verifiable and therefore unfalsifiable, which is the antithesis of the scientific approach.

A rigorous approach would consist of first establishing robust data, then systematically testing each prosaic hypothesis against this information, retaining the one that explains the greatest proportion of observations. In the case of Aguadilla, this method reveals that the lantern hypothesis does not adequately explain the radar echoes detected, the absence of a mass release, despite being described as ‘common practice’, and the number of objects observed. Beyond these factual contradictions, the report presents another methodological paradox: how can a ‘case be declared solved on 20 March 2025’ while admitting ‘moderate confidence’ in this resolution?

Another striking example concerns debunker Mick West’s analysis of videos released between 2007 and 2017 by former senior officials at the Pentagon. These videos show objects filmed by US Navy fighter jets in the FLIR1, GIMBAL and GOFAST sequences, objects that the Pentagon has officially declared to remain unidentified. West offers conventional explanations, attributing these phenomena respectively to a distant aircraft, an aircraft whose apparent rotation is an artifact of the camera, and a weather balloon. Faced with detailed testimonies from the pilots and radar operators involved, West simply dismisses them, stating that ‘eyewitness accounts are very difficult to analyse because they are subject to observation and memory errors’, preferring to focus solely on the videos. These conclusions imply that highly trained fighter pilots, experienced radar operators, and the entire naval intelligence staff collectively mistook mundane objects for phenomena extraordinary enough for the Pentagon to officially classify them as unidentified.

These examples reveal a common drift in the application of scientific scepticism. Rather than proceeding with a methodical examination of all available data, the AARO and Mick West favour conventional explanations at the expense of analytical rigour. The AARO illustrates a flagrant lack of ethics by beginning its official report with its conclusion and assigning the case the status of ‘resolved’ while acknowledging that it has moderate confidence in its conclusion, which is an apparent contradiction. Mick West reveals a systemic confirmation bias, carefully selecting data that supports his mundane conclusions while discarding those that contradict them. In both cases, the analytical process is reversed: instead of examining all the data to deduce the most coherent explanation, one starts with a pre-established hypothesis that one then strives to validate.

This drift shows how scepticism can turn into dogma when it serves more to confirm preconceptions than to rigorously examine the available data. A genuine scientific approach would instead require honestly acknowledging the limitations of our current explanations in the face of data that does not fully conform to them.

These considerations lead us to a more fundamental question about the nature of scientific knowledge itself. How can we establish what constitutes valid knowledge? This question involves understanding the evolving and provisional nature of scientific truth.

Epistemology & truth

Even when they are scientific, our tools do not convey reality in its entirety. Objectivity in science is based on protocols, thresholds, and interpretations. It is not a complete reflection of reality, but a rigorous framework, constructed to approximate a coherent, reproducible, and always provisional representation of it, in order to establish the best description available at a given moment.

The history of science is full of examples of the impermanence of scientific truth. The concept of the atom, imagined since ancient times as an indivisible unit of matter, has evolved profoundly over the course of discoveries. Newtonian gravity, long considered universal, was supplemented by general relativity, necessary to describe phenomena under extreme conditions. Even Einstein was confronted with the evolving nature of science. Convinced that the universe must be static, he introduced a constant into his equations to reconcile reality with his belief. However, Edwin Hubble’s observations showed that the universe was expanding. The constant was then abandoned.

It was reintroduced, however, in 1998 to explain the acceleration of this expansion, which is now attributed to what is known as dark energy, a hypothetical form of energy of completely unknown nature, thought to make up nearly 70% of the universe. Alongside it, dark matter, postulated to explain the gravitational cohesion of galaxies, is thought to represent about 25%. Our knowledge of the world therefore rests on a gaping margin, since 95% of reality is only assumed according to the standard cosmological model, as long as it is not refuted or supplemented.

This figure does not mark a limit, however. It opens up a vast space, ripe for exploration. What we do not yet know is not a void, but a field of opportunities to broaden our horizons, question our certainties, and welcome the unexpected. Certain phenomena or objects, such as those now grouped under the term UAP, arise precisely in these still-unclear areas, at the frontiers of our understanding.

Serendipity and intuition

Some of the greatest scientific advances did not arise from a planned protocol, but from a mixture of curiosity and openness to the unexpected.

Two phenomena stand out: intuition and serendipity.

The intuition referred to here is a subconscious cognitive process based on acquired expertise, not to be confused with the concept of intuition as described in philosophy or metaphysics. Serendipity is the transformation of a chance observation into a significant discovery.

The following examples remind us that the construction of knowledge occasionally manifests itself through unexpected detours.

Examples of discoveries guided by intuition

Albert Einstein and the special theory of relativity (1905)

By imagining himself chasing a ray of light, Einstein challenged the classical conception of time and space. This intuition, born from a simple mental image, led him to formulate one of the most revolutionary theories in modern physics.

Watson and Crick and the structure of DNA (1953)

Watson and Crick proposed the double helix structure of DNA by combining existing data, chemical rules and strong structural intuition. Their hypothesis, formulated before any experimental confirmation, was subsequently validated and became a cornerstone of modern biology.

Alfred Wegener and continental drift (1912)

Observing the correspondence between the coastlines of the continents and the geological and fossil similarities on either side of the oceans, Alfred Wegener developed the theory of continental drift in 1912. Intuitively convinced that the continental masses had once been joined together, he put forward this hypothesis without being able to demonstrate the physical mechanisms involved. Rejected by most of his contemporaries due to a lack of tangible evidence, his theory was nevertheless confirmed nearly fifty years later with the emergence of plate tectonics, becoming a pillar of modern geology.

Examples of discoveries resulting from serendipity

Alexander Fleming and penicillin (1928)

While studying the growth of staphylococci, Alexander Fleming noticed that mould had prevented their development on a forgotten culture. He was not looking for an antibiotic, but recognised the importance of this anomaly, thus discovering penicillin, a major breakthrough that would revolutionise medicine.

Penzias and Wilson and the cosmic microwave background (1965)

While attempting to eliminate interference on a radio antenna, the two radio astronomers accidentally discovered the fossil radiation of the universe, providing experimental confirmation of the Big Bang.

Wilhelm Röntgen and X-rays (1895)

While working on cathode ray tubes, Wilhelm Röntgen observed a strange glow on a fluorescent screen. He deduced the existence of invisible radiation, which he named X-rays, and which would become the basis of medical imaging.

Henri Becquerel and radioactivity (1896)

Henri Becquerel was investigating whether uranium salts exposed to sunlight emitted radiation similar to X-rays. He discovered that his photographic plates were darkened even when the salts had not been exposed to light, thus revealing radioactivity.

Percy Spencer and the microwave oven (1945)

While working on a magnetron for radar, Percy Spencer noticed that a chocolate bar was melting in his pocket. This phenomenon led him to explore the culinary potential of microwaves, resulting in the invention of the first oven of its kind.

These examples show that open-mindedness, whether it takes the form of accurate intuition or attention to the unexpected, can play a decisive role in the emergence of new knowledge.

What evidence, for what phenomenon?

Given the complexity of studying UAPs, where manifestations and effects seem to defy conventional categories, it is reasonable to think that the most fruitful advances would not come from a single field, but perhaps from the convergence of several disciplinary spheres. A holistic approach, integrating the hard sciences, humanities and medicine, could pave the way for a more complete understanding of the phenomenon, and even explanations as to its nature.

This need for a shared framework is also highlighted in the Sky Canada report published in 2025 by the Office of the Chief Science Advisor of Canada, which calls for interdisciplinary and international collaboration based on rigorous analysis protocols. In this spirit, the model proposed by Jacques Vallée and Eric Davis, used in particular in the AAWSAP-BAASS programme, offers a classification method for studying the phenomenon across six complementary layers:

the physical layer, which includes material and measurable data (ground traces, electromagnetic disturbances, light emissions, etc.);

the anti-physical layer, which includes aspects reported as contradicting the known laws of physics (changes in form, instantaneous disappearance, missing time, etc.);

the psychological layer, which focuses on how witnesses perceive, interpret and react to the event;

the physiological layer, which concerns the sensory or biological effects experienced by witnesses during or after the experience, as well as the results of associated medical analyses;

the psychic layer, which deals with interactions involving consciousness, dreams or extrasensory effects;

the cultural layer, which analyses the integration of the phenomenon into a society’s narratives, beliefs, media or religious frameworks.

This model has the advantage of not excluding one category of data in favour of another, but instead considers them as an articulated whole.

It provides a multidimensional analytical framework, allowing heterogeneous evidence to be organised into complementary strata and the complexity of the phenomenon to be better understood.

However, in a field that is still in its infancy, this structure could offer much more than a simple classification framework. It could become the necessary foundation for establishing shared standards among teams and institutions engaged in the study of the phenomenon. This is because scientific consensus, the condition for the admission of a fact into the collective knowledge base, is based above all on the reproducibility of results: an observation or hypothesis is only valid if it can be reproduced, validated or invalidated by others.

This last point is fundamental. In science, an idea is robust not because it is beyond criticism, but because it can be tested, confirmed or refuted. If a hypothesis cannot be tested, it cannot be validated or invalidated. For example, the statement ‘there is an invisible entity that controls everything but cannot be seen or measured’ is neither measurable nor falsifiable and is therefore not scientific. Not because it is false, but because it is unverifiable. Yet a paradigm shift requires scientific validation in order to be accepted and become a reality.

Conversely, the following hypothesis: ‘There are objects whose trajectories exhibit instantaneous accelerations incompatible with the current laws of physics’ has potential scientific value precisely because it is testable.

The Vallée-Davis 6-layer model, with its interdisciplinary design and modular logic, provides a common foundation for the rigorous study of the phenomenon. It does not define a method per se, but provides a shared analytical framework capable of organising data into coherent categories. It allows for the collection of comparable observations in harmonised formats, paves the way for cross-disciplinary meta-analyses, and creates a shared language between disciplines. This interoperability is essential to overcome the current isolation of approaches, which remain too compartmentalised in this field of study. It would allow us to move from an accumulation of disparate data to a cumulative approach capable of generating a true body of knowledge.
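To give a concrete sense of what a harmonised format could look like, here is a minimal sketch of a case record organised by the six layers. It is purely hypothetical: the class name, field names and example observations are invented for illustration and do not come from Vallée and Davis or from any existing programme.

```python
import json
from dataclasses import asdict, dataclass, field

# The six layers of the model, used here as fixed category names.
LAYERS = (
    "physical",
    "anti_physical",
    "psychological",
    "physiological",
    "psychic",
    "cultural",
)

@dataclass
class CaseRecord:
    """Hypothetical container grouping observations of one case by layer."""
    case_id: str
    layers: dict = field(default_factory=lambda: {name: [] for name in LAYERS})

    def add(self, layer, observation):
        """File an observation under one of the six layers."""
        if layer not in LAYERS:
            raise KeyError(f"unknown layer: {layer}")
        self.layers[layer].append(observation)

record = CaseRecord("example-001")
record.add("physical", "ground trace measured at the site")
record.add("psychological", "witness reported strong surprise")

# A shared, machine-readable format that different teams could exchange.
serialised = json.dumps(asdict(record), sort_keys=True, indent=2)
print(serialised)
```

The point of such a structure is not the particular fields chosen, but that every team files its observations under the same categories, which is what makes later comparison and meta-analysis possible.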

However, such a convergence effort can be difficult to implement. Even though the scientific method is based on universal principles, practices differ significantly depending on cultural, institutional or technical contexts. Harmonising methods, formats and tools will require a collective commitment. This is the price of shared progress. History shows that serious errors can result from the absence of a common reference framework.

In 1999, NASA’s Mars Climate Orbiter probe was lost due to a simple conversion error between measurement systems. The Lockheed Martin team that built the probe used the imperial system, while the Jet Propulsion Laboratory team responsible for navigation used the metric system. Methodological coordination based on clear standards is therefore a prerequisite for any significant progress in a field as complex as the one discussed in this article.
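The mechanism of such an error is easy to reproduce. The mishap is generally described as an impulse value produced in pound-force seconds and read as if it were in newton-seconds. The sketch below uses an invented value and a hypothetical `Impulse` type to show how carrying the unit with the number makes the mismatch explicit.

```python
from dataclasses import dataclass

LBF_S_TO_N_S = 4.4482216152605   # one pound-force second in newton-seconds

@dataclass(frozen=True)
class Impulse:
    """Hypothetical value object: a number that always carries its unit."""
    value: float
    unit: str   # "N*s" or "lbf*s"

    def to_newton_seconds(self):
        if self.unit == "N*s":
            return self.value
        if self.unit == "lbf*s":
            return self.value * LBF_S_TO_N_S
        raise ValueError(f"unknown unit: {self.unit}")

# Invented example value, produced in imperial units.
reported = Impulse(10.0, "lbf*s")

misread = reported.value                 # bare number taken as newton-seconds
correct = reported.to_newton_seconds()   # explicit conversion

print(f"Misread as {misread:.2f} N*s, actually {correct:.2f} N*s")
print(f"Underestimated by a factor of {correct / misread:.2f}")
```

A bare number passed between two teams silently loses a factor of about 4.45. A shared convention, whether a common unit or a format that records the unit alongside the value, removes the ambiguity.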

From data to collective responsibility

This need for shared standards is in line with a methodological reflection developed by Garry Nolan, an internationally renowned immunologist, professor of pathology at Stanford and prolific inventor, co-author of the scientific report ‘The New Science of UAP’ and advisor to the Senate and House of Representatives intelligence committees. In 2013, he was contacted by a former CIA officer and an aerospace executive. His mission was to analyse medical problems affecting military and diplomatic personnel, cases that would later prove to be among the first instances of Havana syndrome, officially recognised since 2021 by the US government, which now compensates victims.

During a conference presentation at the Archives of the Impossible symposium held at Rice University in April 2025, Dr. Nolan developed his scientific vision for the study of UAPs. He established a fundamental distinction between three levels of progression from data to knowledge. Raw data corresponds to direct, unprocessed observations, such as radar measurements or eyewitness accounts. This data constitutes the raw material, but remains meaningless until it is contextualised. Evidence represents the same data once it has been interpreted within the framework of a hypothesis and a rigorous methodology. Finally, conclusions correspond to the explanatory interpretations that can be drawn from this evidence after study and analysis.
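The three levels described above can be sketched as a small data structure. This is only an illustration of the distinction, not a format proposed by Nolan: the class names, field names and the `interpret` helper are all assumptions introduced here.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class RawDatum:
    """A direct, unprocessed observation (hypothetical structure)."""
    source: str   # e.g. "radar" or "eyewitness"
    payload: str  # the observation itself, not yet interpreted


@dataclass(frozen=True)
class Evidence:
    """The same datum, read within a stated hypothesis and method."""
    datum: RawDatum
    hypothesis: str
    method: str


@dataclass
class Conclusion:
    """An explanatory interpretation that points back to its evidence."""
    statement: str
    supported_by: list = field(default_factory=list)


def interpret(datum: RawDatum, hypothesis: str, method: str) -> Evidence:
    """Promote a raw datum to evidence; both context fields are required."""
    if not hypothesis or not method:
        raise ValueError("evidence needs both a hypothesis and a method")
    return Evidence(datum, hypothesis, method)


# Illustrative values only.
datum = RawDatum(source="radar", payload="unexplained track, 12 s duration")
evidence = interpret(datum, "object is a physical craft", "cross-check with IR video")
conclusion = Conclusion("origin remains undetermined", [evidence])
print(conclusion.supported_by[0].datum.source)
```

In this sketch a conclusion can be discarded or replaced without touching the underlying data, which mirrors the idea that data and method should outlive any single interpretation.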

This distinction is crucial, because it is very easy to refute a conclusion, but much more difficult to challenge verifiable data and a reproducible methodology. As Jacques Vallée taught Nolan, who considers Vallée his mentor: ‘it’s the data, not the conclusion’. The primary objective is not to validate a particular hypothesis, but to establish the reliability of the data collected. According to Nolan, if he can convince another scientist that the data is authentic and the methodology rigorous, then the responsibility for interpretation becomes collective.

This perspective also clarifies the role of peer review. Contrary to popular belief, this process is not intended to validate the conclusions of a study, but to ensure methodological validity. According to Nolan, the important thing is that the method of analysis is recognised as rigorous, thus allowing the conclusions to be considered acceptable in the established scientific context.

On the other hand, in response to critics who claim that there is no evidence, Nolan offers a direct response: ‘What research have you done yourself?’ This question often reveals that those who deny the existence of evidence have in fact conducted no personal investigation. For Nolan, there is a great deal of evidence that is widely available, but it is obviously not irrefutable proof.

In his view, absolute proof is rare in science and only really works in mathematics. We could add the legal field, where material evidence such as DNA can irrefutably establish a person’s presence at a crime scene. In the experimental sciences, however, the rarity of absolute proof stems from the very nature of scientific knowledge, which is essentially evolving and provisional.

This approach recalls the very foundations of the scientific method, designed to avoid confirmation bias and hasty conclusions. In the study of UAPs, where the extraordinary nature of the reported phenomena can lead to the premature rejection of data due to a refusal to accept their potential implications, Nolan simply reaffirms these basic principles that some closed-minded academics seem to have forgotten. This approach perfectly embodies the spirit of the Vallée-Davis model, which proposes methodically organising heterogeneous data without prejudging their final interpretation.

A concrete application: material analysis

This methodological approach finds direct application in Nolan’s work on the analysis of materials of presumed non-human origin. Using advanced atomic tomography instruments co-developed by his team at Stanford (MIBI-TOF), he examines samples such as those from the Ubatuba case (1957, Brazil), where fishermen observed a flying disc explode over the ocean before recovering fragments of 99.99% pure magnesium. According to him, these materials constitute troubling clues, particularly the pure magnesium found on the Brazilian coast in the 1950s, a material of exclusively artificial origin whose presence in that place and at that time remains highly improbable. True to Vallée’s teachings, Nolan refrains from giving definitive conclusions about the origin of these materials. However, the interpretation of these results must take into account the fact that the analytical technologies used, although proven in the biomedical field, are being applied here in a new context of aerospace forensics. As illustrated by his team’s own analysis of the Council Bluffs case, even with advanced analytical techniques and samples recovered immediately after the event, definitive interpretation remains difficult. This extension of use will require specific validation and replication of results by independent teams using complementary methods.

Testimonies: a basis for research

Testimony is recognised as a form of evidence in law. In the case of UAP studies, while it does not constitute scientific validation, it remains a valuable piece of evidence that can guide analysis, help formulate hypotheses and highlight areas worthy of further exploration.

This perspective invites us to reconsider the vast body of existing testimonies. For example, the Mutual UFO Network (MUFON) has a database of more than 140,000 cases documented since 1969, while the National UFO Reporting Center (NUFORC) has recorded 180,000 cases since 1974. Too often ignored, marginalised or ridiculed, these accounts nevertheless constitute a wealth of raw material. However, this stigmatisation is not without consequence. It probably contributes to the reluctance of certain academic circles to engage in the study of these phenomena.

The case of psychiatrist John E. Mack is emblematic. A professor at Harvard Medical School and winner of the Pulitzer Prize, he studied accounts of possible close encounters and abductions by non-human entities. Unsurprisingly, his work sparked heated controversy, leading to an internal investigation by the university. Although he retained his position, this case illustrates the difficulty, even for established researchers, of exploring subjects outside the dominant paradigms without risking their reputation.

Mass sightings and persistent anomalies

One particular category of testimony deserves closer attention: mass sightings. These events sometimes involve dozens or even thousands of witnesses describing the sighting of one or more UAPs.

On 27 October 1954 in Florence, thousands of spectators at a football match at the Artemio Franchi stadium witnessed an object moving slowly above the stadium. Intriguingly, some witnesses reported a cigar shape, while others claimed it was egg-shaped. Its strangeness led the players to interrupt the game, mesmerised by what they were seeing. In addition, a white, filamentous substance, nicknamed ‘angel hair’, fell from the sky during the sighting. This material was analysed and found to contain boron, silicon, calcium and magnesium, with no conclusive explanation as to its origin.

In 1966, in Westall, Australia, several hundred pupils and teachers observed an unconventional flying object land and then take off again.

In 1994, at Ariel School in Ruwa, Zimbabwe, 62 children reported seeing a craft on the ground and humanoid beings who communicated telepathically with some of the children. This sighting was analysed psychologically by Dr Mack, who concluded that the children were sincere and emotionally affected. According to him, there was no doubt about the reality of their experience, with no signs of collective fabrication.

On 13 March 1997, tens of thousands of witnesses across Arizona and Nevada observed what has become one of the most documented UFO events in modern history, known as the ‘Phoenix lights’. Between 7:30 and 8:45 p.m., a V-shaped formation of lights with an estimated wingspan of more than a kilometre crossed the sky, followed by stationary orbs. Among the first witnesses was actor Kurt Russell, a private pilot, who reported the lights to air traffic control as he approached Phoenix. Arizona Governor Fife Symington, a former Air Force captain and pilot, initially dismissed the event at a press conference in June 1997, before admitting ten years later that he himself had observed a craft that he described as ‘otherworldly’. The explanation attributing the sightings to military flares remains disputed.

These massive testimonies are often dismissed as ‘collective hallucinations,’ but this hypothesis, although long invoked, is not based on any scientific evidence. No empirical work has documented the existence of an identical shared sensory perception of a non-existent object, despite more than a century of extensive research on hallucinations.

Cases often cited as ‘collective hallucinations’ are in fact distinct phenomena such as epidemic hysteria (or mass psychogenic illness), characterised by the spread of physical symptoms such as nausea, headaches or fainting, through emotional contagion, without any identifiable organic cause. These phenomena differ fundamentally from stable, detailed and consistent visual observations reported retrospectively by independent witnesses who do not know each other.

Analysed with contemporary tools, however, the testimonies could constitute a fertile heuristic basis. Artificial intelligence, for example, could reveal patterns or statistical regularities from databases of testimonies, an approach developed by Jacques Vallée as early as the 1960s.

Whistleblowers and classification: when evidence remains inaccessible

Witnesses from the military and intelligence spheres occupy a unique place in this data corpus because their institutional status lends particular credibility to their extraordinary revelations.

The nature of the allegations varies according to their exposure to the phenomenon. Some claim to have had direct access to recovery and reverse engineering programmes, commonly known as ‘legacy programmes’, while others are said to have obtained second-hand information by consulting classified documents, interviewing witnesses, or accessing briefings in the course of their duties. This position creates the same paradox for all of them, where access to sensitive evidence is accompanied by the impossibility of sharing it in its entirety.

David Grusch, the emblematic case

David Grusch perfectly illustrates this problem. A decorated former intelligence officer and Afghanistan veteran, he served fourteen years in the US Air Force, where he rose to the rank of Major. From 2019 to 2021, he was a member of the UAPTF (Unidentified Aerial Phenomena Task Force) as a representative of the National Reconnaissance Office (NRO), working in the operations centre on the director’s briefing staff, which included coordinating the President’s Daily Brief sent to the White House. From 2021 to 2023, as a civilian, he held a GS-15 position (equivalent to the rank of colonel) at the National Geospatial-Intelligence Agency (NGA), where he was his agency’s co-lead for UAP analysis.

Due to his high-level positions, Grusch held exceptional security clearances. They gave him access to ‘literally every relevant compartment’ and, in his own words, his role constituted ‘a function involving absolute trust’ in both his military and civilian capacities. In 2019, as part of a congressional mandate, the director of the UAPTF entrusted him with a crucial mission: to identify all special access programmes and controlled access programmes (SAP/CAP) in operation within the Department of War. As part of this official investigation, he reportedly interviewed some 40 first-hand witnesses over a period of four years.

These witnesses allegedly revealed to him the existence of clandestine recoveries of craft and what he describes as biological materials of non-human origin. These operations are said to be part of secret recovery and reverse engineering programmes spanning several decades.

Even more seriously, Grusch reportedly discovered that these programmes were not subject to congressional oversight. Although the relevant authorities may waive congressional oversight for certain sensitive special access programmes (waived SAPs), the chairs and ranking minority members of the Armed Services and Appropriations committees of Congress must at least be kept informed. According to Grusch, this was not the case for these secret projects. If so, they would be illegal under the fundamental rules of the American democratic system, which requires all government programmes to be subject to congressional oversight.

Despite his high level of security clearance, Grusch was reportedly denied access to the programmes he had identified. Faced with these obstacles and the pressure he was under, he first filed a complaint with the Inspector General of the Intelligence Community (ICIG) in May 2022. In July 2022, the ICIG described his allegations as ‘credible and urgent’, an assessment that greatly strengthens the plausibility of his claims. He then chose to resign in 2023 so that he could speak publicly as a whistleblower. On 26 July 2023, believing that such revelations should be brought to the attention of the public, he testified under oath at a public hearing before the House of Representatives Subcommittee on National Security.

The constraints of the DOPSR process

In order to publicly disclose some of these explosive allegations while ensuring that he would not be prosecuted for divulging sensitive information, Grusch had to submit his statements to the DOPSR (Defense Office of Prepublication and Security Review) process. This office reviews all material that former military or intelligence personnel wish to publish to ensure that no classified information is disclosed to the public and, by extension, to the United States’ adversaries. The aim is to protect US intelligence sources, methods and capabilities, as well as strategic technologies that could confer a military advantage.

This mandatory procedure applies to all former personnel who had access to classified information, even after the end of their service.

In the case of whistleblowers such as Grusch, they can only transmit their classified information to individuals with the same level of clearance and only in a SCIF (Sensitive Compartmented Information Facility). These secure spaces, specially designed for the exchange of sensitive information, are isolated from any electronic surveillance and equipped with protections against espionage.

This security architecture poses a major challenge for any public verification. Grusch claims to be able to indicate the precise locations where objects of non-human origin are stored, but can only provide proof by complying with strictly regulated protocols. His claims thus escape traditional citizen and scientific scrutiny. To date, the DOPSR has still not granted him permission to publicly reveal what he considers to be his own first-hand experience, so the basis for the first-hand witness status he claims remains unknown.

Tensions between secrecy and transparency

This security architecture poses challenges that go far beyond simple public verification. Grusch’s journey illustrates the personal risks incurred by these witnesses. Following his revelations, he claimed to have suffered reprisals: the leaking of his medical records, threats and even the loss of certain veterans’ benefits. This dissuasive climate explains the reluctance of other potential witnesses, who prefer to testify behind closed doors or remain silent.

Jacob Barber, a former special forces soldier who became a private contractor for companies in the American military-industrial complex, claims to have been involved in programmes to recover non-human craft. His testimony reveals a striking paradox: when he sought protection from senior officials, he was asked to protect them himself. This reversal of roles speaks volumes about the concern that is spreading among the highest echelons of power regarding this sensitive subject.

This compartmentalisation has become even more pronounced in recent years. According to a report by whistleblower Matthew Brown, released in 2024, a secret programme called Immaculate Constellation has been in place since 2017, equipped with automated systems for detecting and quarantining any data related to UAPs, preventing their normal circulation within the intelligence apparatus itself. These automatic classification mechanisms developed by the Department of War illustrate the extent of the structural obstacles that the executive branch is placing in the way of the transparency efforts being made by some members of Congress.

Towards controlled disclosure?

Faced with the systemic blockages revealed by the Grusch affair and the dysfunctions of the current system, an unprecedented response has emerged within the US Congress. The Unidentified Anomalous Phenomena Disclosure Act (UAPDA), sponsored by Senators Chuck Schumer and Mike Rounds, represents a major shift in the legislative approach to the issue of evidence concerning UAPs.

The bill establishes that all government documents concerning UAPs are considered declassifiable by default, unless there is justification to the contrary. The burden of proof has been completely reversed, as it is no longer up to the public to justify why the information should be accessible, but up to government agencies to demonstrate why it must remain classified.

The text of the bill makes extensive use of the term ‘non-human intelligence’ (NHI), a formulation that reflects the conceptual evolution of the debate at the highest levels of the US government. This terminology, used 22 times in the legislative text, reveals that the issue is no longer limited to UAPs but now explicitly encompasses the hypothesis of a non-human intelligent origin.

A revealing process of weakening

The history of this legislation reveals, however, a process of gradual erosion that perfectly illustrates the structural resistance to any form of transparency on this subject. The original 2023 version provided for the creation of an independent review committee, composed of nine experts appointed by the president and confirmed by the Senate, with a budget of $20 million. This committee would have had considerable powers, including access to all classified programmes, the power to issue subpoenas, and even the right to seize ‘recovered technologies of unknown origin’ held by private entities.

This ambitious version was opposed by a coalition led by Representative Mike Turner, then chairman of the House Intelligence Committee, and Mike Rogers, chairman of the Armed Services Committee. Both representatives had received hundreds of thousands of dollars in contributions from defence contractors who would have been directly affected by the bill. These financial ties create an obvious structural conflict of interest, since these companies would have had to surrender their ‘technologies of unknown origin’ under the original provisions of the bill.

Lockheed Martin’s opposition makes perfect sense when one considers the allegations made by Senator Harry Reid, who died in 2021. Reid claimed to have been ‘informed for decades that Lockheed had some of these recovered materials’. Despite his position as Senate Majority Leader, Reid was denied permission by the Pentagon to inspect these alleged materials. If such technologies do indeed exist at Lockheed Martin’s facilities, the UAPDA and its power of confiscation would pose an existential threat to private control of these potentially revolutionary technologies.

At the same time, the Pentagon and the AARO have been waging their own campaign against the UAPDA. Sean Kirkpatrick, former director of the AARO, confirmed that his office opposed key provisions of the amendment, citing duplication of missions already assigned to the AARO by Congress. This opposition reveals a striking paradox. The agency responsible for investigating allegations of government cover-ups is opposing the creation of an independent oversight mechanism.

In 2024, only a few superficial elements of the UAPDA were incorporated into the NDAA, creating a simple database of documents stripped of the bill’s original substance. The independent review committee was removed entirely and the right of seizure was eliminated. All that remained was a simple centralisation of documents relating to UAPs, administered by the National Archives. The power of disclosure remained with the same agencies that had organised the cover-up for decades.

The struggle over this legislation continues. In 2025, Schumer and Rounds again introduced the full version of the UAPDA as an amendment to the 2026 NDAA (National Defense Authorization Act, the annual military programming law defining the defence budget and policies), reintroducing the independent review committee and the right of seizure. This legislative persistence reflects the senators’ conviction that a controlled disclosure mechanism is still necessary, despite the institutional and private resistance that scuttled previous versions.

However, this institutional architecture also reveals the intrinsic limitations of any controlled disclosure. The president retains veto power over the committee’s decisions, and the disclosure of the most sensitive documents may be postponed for up to 25 years after their creation. Disclosure therefore remains subject to the sovereign discretion of the executive branch.

This legislative development perfectly illustrates the fundamental tension at the heart of the issue of evidence in the field of UAPs. On the one hand, it institutionally recognises the legitimacy of the demand for transparency and the need to subject all government programmes to congressional oversight. On the other hand, it reveals the extent of the resistance to this basic democratic requirement.

The very intensity of this opposition raises questions. If UAPs were really nothing more than a series of misunderstandings and misinterpreted natural phenomena, the systematic opposition to their transparent study would seem disproportionate. This resistance to any form of independent oversight suggests the existence of information that certain actors deem necessary to protect, whether it be classified conventional programmes, advanced technologies, or other elements relating to national security.

The repeated failure of these attempts at transparency also reveals the mechanisms by which private interests can appropriate the democratic process. The phenomenon of ‘revolving doors’ between the Pentagon and defence contractors crystallises this systemic influence. In 2024, nearly 70% of Lockheed Martin’s lobbyists were former government officials, a situation that facilitates influence over political decisions. In such a context, the distinction between public and private interests becomes blurred, making it difficult to establish any independent oversight.

The pioneers of modern investigation

Long marginalised, the study of unidentified aerospace phenomena is now experiencing a revival marked by the emergence of ambitious initiatives led by researchers and scientific organisations around the world.

On the technological front, the Galileo (Avi Loeb, Harvard) and Skywatch (Mitch Randall) projects are developing detection systems specifically designed to test bold hypotheses, such as the existence of objects with instantaneous accelerations incompatible with current physical laws. Galileo is deploying multimodal observatories equipped with artificial intelligence to automatically identify UAPs, while Skywatch is proposing the creation of a national network of passive radars using commercial aircraft signals as a continuous calibration reference.

At the same time, organisations such as UAPx and the Scientific Coalition for UAP Studies are producing scientific analyses. The SOL Foundation, co-founded by Garry Nolan, takes a complementary approach by organising public conferences and stimulating interdisciplinary academic research, particularly in the field of analysing potentially anomalous materials.

The VASCO project (Dr. Beatriz Villarroel) offers a particularly original approach by analysing a century of astronomical photographic plates to identify unexplained transient sources. On 20 October 2025, the team published a peer-reviewed study in Scientific Reports (part of the Nature portfolio) reporting more than 100,000 candidate transients of unknown nature in images from the Palomar Sky Survey of the 1950s, taken before the launch of the first artificial satellite, including days with several thousand detections. Their findings of aligned ‘multiple transients’, coinciding with historical events such as the observations made in Washington, D.C., in July 1952, raise fascinating questions. The study also highlights statistically significant correlations between these transients and atmospheric nuclear tests, as well as waves of UAP observations from that period.

However, these initiatives reveal a paradox. While research is becoming more structured and professional, these projects still operate largely in silos. As suggested earlier, coordination between these teams would allow them to share protocols, standardise data formats and pool discoveries to optimise their work and promote research in all areas covered by the phenomenon.

Nevertheless, the emergence of these multiple initiatives reflects a fundamental change. For the first time in the history of this subject, scientists equipped with modern tools are methodically tackling this enigma. Whether detecting impossible trajectories, analysing historical data or crossing disciplines, these pioneers are laying the foundations for a rigorous approach that may one day finally answer the fundamental question: where is the evidence?

The appendix provides a more complete, though not exhaustive, list of current initiatives.

The proof of the mystery

The analysis of the Rubber Duck case illustrates a fascinating paradox. A scientific study can demonstrate that an observed phenomenon remains unexplained, even after thorough investigation. In 2022, the Scientific Coalition for UAP Studies (SCU) published a detailed analysis of an infrared video recorded on 23 November 2019 by an RC-26B aircraft of the Arizona National Guard over the Buenos Aires National Wildlife Refuge.

The observed object has unique characteristics. Kinematic analysis shows that it rotates regularly for about thirty minutes, following a stable and consistent trajectory, inconsistent with the behaviour of an object driven by the wind. In fact, meteorological data indicate that local winds ranged from 3 to 45 mph (5-72 km/h) depending on altitude, with a maximum of 33 mph (53 km/h) at the flight level of the National Guard aircraft. However, tangential velocity calculations show that at all recorded altitudes, the object was moving faster than the wind, which rules out the possibility of a balloon carried by atmospheric currents.
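The reasoning in this paragraph combines two simple steps: converting the quoted wind speeds between units, then checking that the object outpaces the wind at every altitude. The sketch below illustrates both. The wind figures are those quoted above; the altitude labels and the object speeds are hypothetical placeholders, since the text does not give the SCU's computed values.

```python
MPH_TO_KMH = 1.609344  # exact: 1 mile = 1.609344 km


def mph_to_kmh(mph: float) -> float:
    """Convert a speed from miles per hour to kilometres per hour."""
    return mph * MPH_TO_KMH


def faster_than_wind_everywhere(object_mph: dict, wind_mph: dict) -> bool:
    """True if the object's speed exceeds the wind speed at every altitude.

    Both arguments map an altitude label to a speed in mph.
    """
    return all(object_mph[alt] > wind_mph[alt] for alt in wind_mph)


# Wind figures quoted in the text (mph), converted to check the km/h values.
for mph in (3, 45, 33):
    print(f"{mph} mph = {mph_to_kmh(mph):.0f} km/h")

# Hypothetical altitude bands and object speeds, for illustration only.
wind = {"lowest": 3.0, "flight_level": 33.0, "highest": 45.0}
obj = {"lowest": 20.0, "flight_level": 50.0, "highest": 60.0}

# A balloon drifts with the air mass, so it should not outrun the wind.
print("balloon hypothesis ruled out:", faster_than_wind_everywhere(obj, wind))
```

The test is deliberately strict: a single altitude at which the wind matches or exceeds the object's speed would leave the balloon hypothesis open at that altitude.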

Infrared analysis reveals that the object does not appear to emit any heat of its own. According to the SCU, this impression results from both the operation of the FLIR sensor and the possibly reflective nature of the object’s surface, which would reflect the temperature of the sky or the surrounding atmosphere. This thermal behaviour is incompatible with known propulsion systems.

A methodical examination of conventional hypotheses leads to a puzzling conclusion. The latex weather balloon is ruled out because this material is transparent to infrared and would transmit the temperature of the ground. The Mylar-type metallised balloon is also rejected, as it bursts between 3,000 and 8,000 feet altitude. The drone hypothesis does not hold up either. Electric-powered models have a range well below the estimated observation time of more than forty-five minutes, while combustion-engine models produce a clearly visible infrared signature, which is absent here. Furthermore, the shape of the object does not correspond to any known type of drone. The object, estimated to be between 1 and 9 feet (30 to 275 cm) in size, retains a rigid and regular shape, with no apparent aerodynamic structure.

At the end of this analysis, SCU researchers conclude that known explanations cannot account for the observations. The object does not correspond to any identified natural phenomenon or any known propulsion technology. It therefore remains classified as an unidentified aerial phenomenon, real and worthy of scientific study.

Conclusions

The question ‘where is the evidence?’ is a legitimate one when it comes to unidentified aerospace phenomena. However, this seemingly simple requirement comes up against a complex reality. Our perceptual and conceptual tools, forged to ensure our survival, struggle to comprehend manifestations that defy our established categories. The SCU’s analysis of the Rubber Duck case illustrates this difficulty: by methodically demonstrating the inadequacy of conventional explanations, it establishes the limits of our understanding without being able to identify the observed phenomenon.

This situation calls for a revision of our approach. Rather than seeking definitive proof that would resolve the issue once and for all, the rigorous study of UAPs requires the deployment of an ecology of evidence, articulating different types of data into a coherent and evolving whole. Promising methodological avenues are emerging, from the interdisciplinary model of Vallée-Davis to technological projects such as the Skywatch passive radar networks, which would enable the establishment of a standardised and reproducible scientific approach.

This research, however, faces resistance reminiscent of the tragic history of scientific revolutions. Giordano Bruno was burned alive in 1600 for, among other things, defending the infinity of the universe. Galileo narrowly escaped the same fate under threat from the Inquisition. His contemporaries struggled to conceive that the Earth was not the centre of the universe, an idea so obvious today that it seems trivial to us.

The commitment of scientists in this still marginalised field remains essential. As Thomas Kuhn reminds us, ‘discovery commences with the awareness of anomaly’. If evidence were to confirm the existence of phenomena beyond our current understanding, this would not mean the end of the mystery, but a shift towards even more fundamental questions.

For we must bear in mind a dizzying truth. We live under the illusion of perceiving reality, when in fact we grasp only a tiny fraction of it. What we call ‘reality’ is only a subjective, tragically partial construction of what our world is.

The humility imposed by the limits of our knowledge echoes the timeless teaching of Socrates, who, more than two millennia ago, had already grasped this fundamental principle: ‘I know that I know nothing’. This recognition of our ignorance, far from being a weakness, is the very foundation of any authentic scientific endeavour. As researcher Diana W. Pasulka points out, Socrates was recognised as the most intelligent man of his time, not because he claimed to know everything, but precisely because he was aware of his ignorance. She adds that ‘this posture of openness and receptivity allows knowledge to find us, rather than locking us into our certainties’.

This observation opens up immense horizons. The universe probably holds mysteries that our instruments cannot yet begin to probe. UAPs invite us to cultivate the fundamental curiosity that drives humanity to explore the unknown and search beyond apparent limits. It is in this quest, guided by rigour and fuelled by wonder, that the future of our understanding of the cosmos may unfold.

APPENDIX

Organisations studying the phenomenon (non-exhaustive list; please contact the editorial team if you wish to add an organisation or correct any information).

A. Government organisations

Type: Official government service. Field: Analysis of testimonies and cases reported on French territory. Layer(s): Physical + Psychological (testimonies + material evidence). Output: Published official analyses.

B. Academic organisations and university research programmes

Type: Academic research programme. Field: Automated observatories. Layer(s): Physical (continuous multimodal detection). Specificity: Artificial intelligence for automatic identification. Output: The project began publishing its first observational data in 2024, with an operational observatory continuously monitoring the sky above Harvard.

Type: Astronomical research programme. Field: Analysis of historical archives. Layer(s): Physical (transient sources on photographic plates). Specificity: Historical approach covering more than a century of data. Output: Identification of unexplained transient sources.

Type: University research institute. Field: AI detection and analysis. Layer(s): Physical + Cultural (detection + search for extraterrestrial life). Specificity: Dual terrestrial (Sky-CAM) and space (SONATE) approach. Output: Artificial intelligence analyses.

SUAPS (Society for UAP Studies)

Type: Academic society. Field: Publication and university education. Layer(s): Cultural + Psychological (academic integration). Specificity: Peer-reviewed academic journal dedicated exclusively to UAPs. Output: Limina journal and university courses.

C. Private organisations and research centres

Americans for Safe Aerospace (ASA)

Type: Civilian non-profit organisation run by former military personnel. Field: Aviation safety and witness support. Layer(s): Psychological + Cultural (confidential testimonies + legislative advocacy). Specificity: Collection of testimonies from civilian and military pilots; over 30,000 members. Output: Centralised testimonies and legislative advocacy (e.g. Safe Airspace for Americans Act).

Type: Private research organisation. Field: Scientific field instrumentation. Layer(s): Physical (infrared, optical, magnetic and radiation sensors). Output: Peer-reviewed scientific publications.

Scientific Coalition for UAP Studies (SCU)

Type: Independent scientific organisation. Field: Analysis of complex cases. Layer(s): Physical (cross-referenced physical data). Output: Scientific analysis reports (e.g. the ‘Rubber Duck’ case).

Type: International civil research and investigation organisation. Field: Field investigation and global database. Layer(s): Physical + Psychological + Cultural (detailed testimonies + multidisciplinary analyses + historical archives). Specificity: More than 4,000 trained investigators worldwide, standardised classification system, database of more than 140,000 cases since 1969. Output: Standardised investigation reports, monthly ‘MUFON Journal’ magazine, annual international symposium.

Type: Independent non-profit civil organisation. Field: Collection and archiving of reports. Layer(s): Psychological + Cultural (testimonies + statistical analyses). Specificity: Public database of over 180,000 witness reports since 1974. Output: Centralised reports, periodic statistical analyses, 24/7 telephone reporting service.

Type: Specialised research centre. Field: Aviation safety. Layer(s): Physical + Physiological (electromagnetic effects + impacts on pilots). Specificity: Unique focus on UAP-aviation interactions. Output: Technical reports and interdisciplinary analyses.

Type: Interdisciplinary expert commission. Field: Scientific analysis of unexplained cases. Layer(s): Physical + Physiological (multiple sensors + biological effects). Specificity: Twenty or so civilian and military experts from various fields (aeronautics, radar, physics, biology, psychology). Output: Interdisciplinary scientific studies.

Type: Interdisciplinary academic foundation. Field: Academic research and public policy. Layer(s): Cultural + Psychological (societal implications + perception). Specificity: Political and philosophical approach to non-human intelligence. Output: Public conferences and academic research.

Type: Multilingual information service and private research centre. Field: Media; scientific. Layer(s): Cultural + Physical (public information + detection). Specificity: Mobile observatory. Output: Multilingual information service (Sentinel News).

Type: Technological project. Field: Passive radar detection. Layer(s): Physical (networked object detection). Specificity: Continuous automatic calibration via ADS-B signals. Output: Reproducible and controlled detection system.

Type: Historical civil organisation. Field: Documentation and archives. Layer(s): Cultural + Psychological (preservation of testimonies + civil coordination). Specificity: Historical reference organisation since 1956. Output: Chronological archives and coordination of investigations.

Type: Independent researchers. Field: Academic field research. Layer(s): Physical + Psychological (instrumented data + testimonies). Specificity: Geographic focus on Long Island, peer-reviewed approach. Output: Academic publications and the book ‘Nightcrawler: Eye on the Sky’.

Type: Space research programme. Field: Detection of artificial orbital objects. Layer(s): Physical (telescope network). Output: Detection methodology (ongoing evaluation).

D. Commercial companies

Type: Private company. Field: Hybrid technology-parapsychology approach. Layer(s): Physical + Psychic + Cultural (technology + consciousness phenomena + taxonomy). Specificity: Controlled landing objectives and classification of UAPs. Output: Hybrid methodology (not based on scientific standards, as of 13/10/2025).